[ Review Disclosure: I’ve owned Axle.ai software since 2014. ]

Today we are awash in media: stills, video and audio clips are proliferating like rabbits across our storage. The challenge with this growth is finding exactly the image we need when we need it. Making these searches even more complex is that we often need to find clips based on their content, not technical specs.

However, while the quantity of our media has exploded, the software we use to find that media has virtually disappeared. Two years ago, more than a dozen high-end media asset managers were available. Today, through acquisitions, ten of those companies are gone.

NOTE: Kyno and Levels Beyond were acquired by Signiant, CatDV was acquired by Quantum, Masstech and Front Porch DIVA were acquired by Telestream, Primestream was acquired by Ross, Cantemo was acquired by Codemill, Iconik and fTrack were acquired by Backlight, and Frame.io was acquired by Adobe.

If you are a small-to-medium-sized facility, your media management options are now extremely limited. Fortunately, there’s Axle.ai.

Years ago, I inherited tens of thousands of physical objects, videos, photos, slides and audio recordings of family history and events dating back over 170 years. My goal was to get these scanned, cataloged and made available to family and friends. Managing all this media is the role of a Digital Asset Manager (DAM). Because of this, several years ago I purchased Axle.ai, which has since undergone a series of updates, most recently earlier this year.

I like Axle a lot, even though it has some interface quirks that drive me nuts. Let me give you a detailed tour.

NOTE: At the end of this review is an interview with Sam Bogoch, CEO of Axle.ai.

EXECUTIVE SUMMARY

Axle is server software that turns a user-supplied computer into a web server hosting a multi-user digital media asset manager (DAM), which organizes and delivers media assets to end users via a local network or the web. While it can be used by a single editor, it is designed to support small- to medium-sized workgroups. Current customers include news, sports, social media, houses of worship, education and media (craft) editing facilities. And me.

While the software itself is amazingly fast, even with over 100,000 assets, real-world performance will depend upon the number of clients using the system at the same time, the speed of the network connection to the Axle server, and the horsepower of the server hardware itself. For small groups, a Mac mini is fine. For larger groups, moving the server to a Mac Studio computer or Linux server may make more sense. While the Axle computer can be used for other tasks, it is generally a good idea to dedicate a computer as the Axle server.

NOTE: Axle.ai can support up to 10 million individual assets.

“Whether your media is stored on premise (NAS or direct attached drives), in the cloud, or a mix of both, axle ai automatically catalogs your media where you’ve already saved it. No change to your file names or folder structures, and no clunky “check in” processes required.

“axle ai generates H.264 proxy files for all your media files, and previews of your image and audio files, too. Anyone on your team can preview these assets in their browsers, on laptops, phones or iPads.” (Axle website)

Axle.ai automatically generates metadata (labels) based on technical specs, and supports custom metadata fields. It uses “Elastic Search” technology (similar to doing a Google search), so searches are performed using plain English rather than arcane search terms.

While optimized for video, it also supports still image and audio assets, creating previews optimized for web browser viewing automatically in the background. Axle.ai also offers an extension panel for Adobe Premiere Pro supporting media searches directly in Premiere.

Like any database, the value of Axle depends upon what you put into it. For those just getting started, hiring a freelance librarian or archivist to plan how to organize and categorize files would be a wise investment.

If you need a faster, better, easier way to store, organize and find media assets to share locally or remotely with your team, Axle.ai is an excellent choice.

Developer: Axle.ai

Website: https://axle.ai/features/

Price: $50/month/user plus add-ons; full purchase options are also available

HARDWARE REQUIREMENTS

The overview is that Axle’s server software is installed on a dedicated Mac or Linux computer, or in a virtual machine in the cloud. Clients access the server via any web browser, including mobile devices, provided they are on the same network as the Axle server. Media is stored separately from the Axle server, generally on a network volume. Axle then catalogs and tracks this media, provided the Axle server has access to the network volumes.

NOTE: Axle can provide remote (through the web) access to the Axle server via Axle’s Reverse Proxy service.

The more users that need to access Axle, the faster the server computer needs to be. In my case, I have 3-5 users, so the Axle server runs quite well on a 2018 Mac mini with 8 GB of RAM and an Intel i7. More RAM would support more users, but 8 GB works fine for me.

NOTE: Axle creates proxies in the background automatically whenever new media is added to the storage pool. Proxy movies are .MP4, audio files are .MP3 and still images are .JPG. These files are called “previews.”
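To make the preview naming concrete, here is a minimal sketch of the mapping described in the note above (video to .MP4, audio to .MP3, stills to .JPG). This is not Axle's code: the lists of source extensions and the function names `media_kind` and `preview_name` are assumptions chosen for illustration, and Axle's real list of supported formats is certainly longer.

```python
from pathlib import Path
from typing import Optional

# ASSUMPTION: illustrative source extensions only; not Axle's actual list.
VIDEO_EXTS = {".mov", ".mp4", ".mxf", ".avi"}
AUDIO_EXTS = {".wav", ".aif", ".aiff", ".mp3"}
IMAGE_EXTS = {".tif", ".tiff", ".png", ".jpg", ".jpeg"}

# Preview formats as stated in the review: MP4 for video, MP3 for audio, JPG for stills.
PREVIEW_EXT = {"video": ".mp4", "audio": ".mp3", "image": ".jpg"}


def media_kind(filename: str) -> Optional[str]:
    """Classify a file as video, audio or image from its extension."""
    ext = Path(filename).suffix.lower()
    if ext in VIDEO_EXTS:
        return "video"
    if ext in AUDIO_EXTS:
        return "audio"
    if ext in IMAGE_EXTS:
        return "image"
    return None  # not a media type this sketch knows about


def preview_name(filename: str) -> Optional[str]:
    """Return the file name a preview would have, or None for unknown types."""
    kind = media_kind(filename)
    if kind is None:
        return None
    return Path(filename).stem + PREVIEW_EXT[kind]
```

For example, `preview_name("Interview_01.MOV")` gives `Interview_01.mp4`, while a file such as `notes.txt` gives `None` because it is not media.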

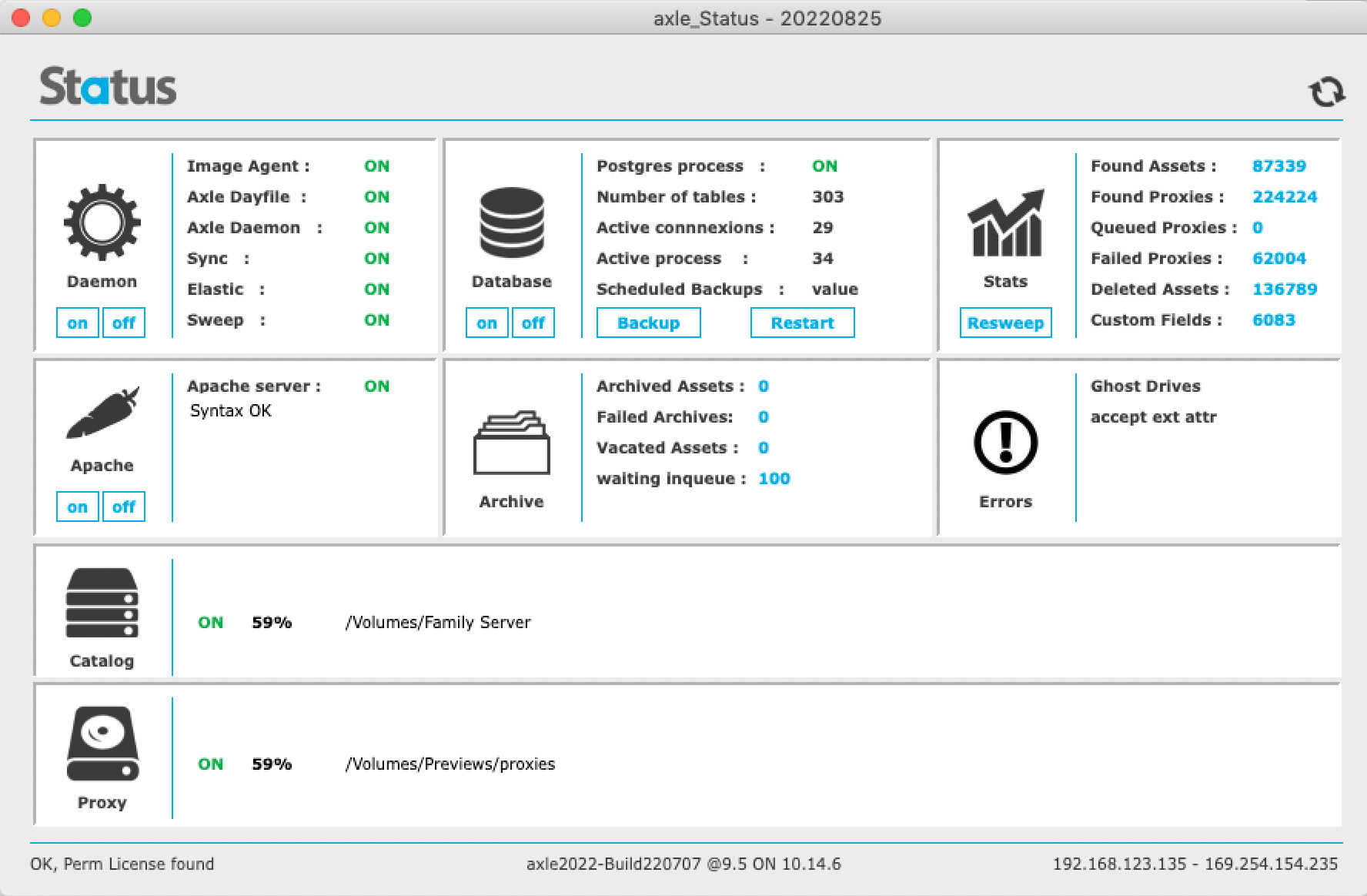

A feature in the Axle server that I use a lot is the Status Report. This shows the number of assets currently under management, along with the status of the entire system. I frequently check this to see the growing size of my library via “Found Assets.”

AXLE.AI INSTALLATION

Axle.ai is not available in the Mac App Store. Instead, it is installed on your computer remotely by the engineers at Axle. After the download, installation and configuration can take an hour or two. My experience with Axle’s techs is that they are extremely well-trained and easy to work with.

Among other things, installation creates initial administration accounts, configures Axle.ai to know where media files are located and defines the location where proxy files (called “Previews”) are stored.

AXLE.AI CONFIGURATION

At heart, Axle.ai is a database for media. Like any database, there’s work to do to get it ready to use.

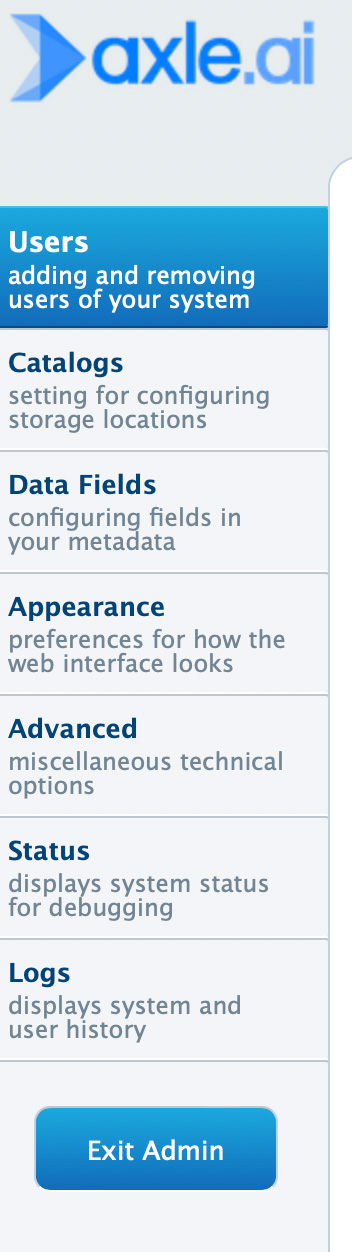

As an administrator, you can:

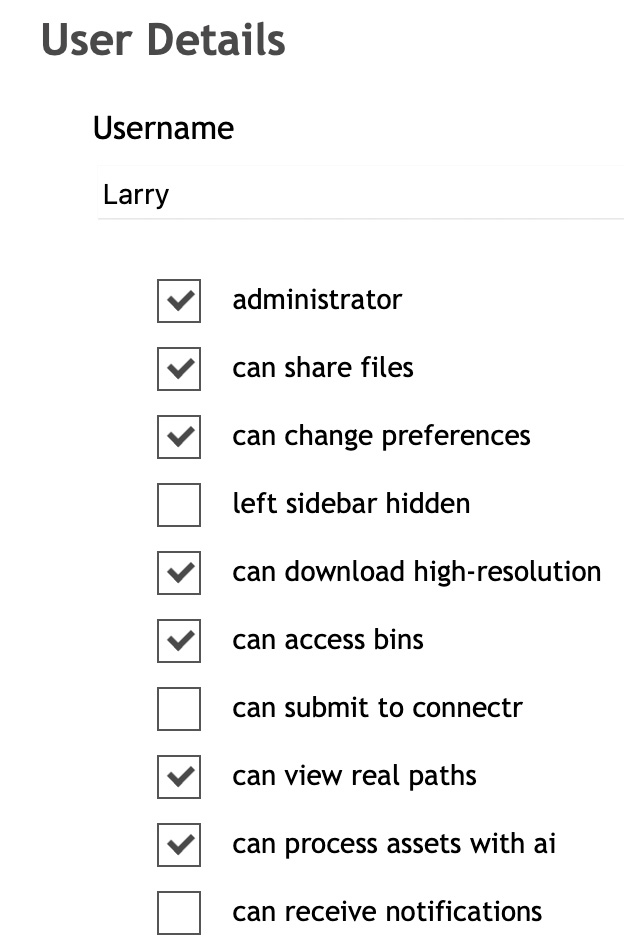

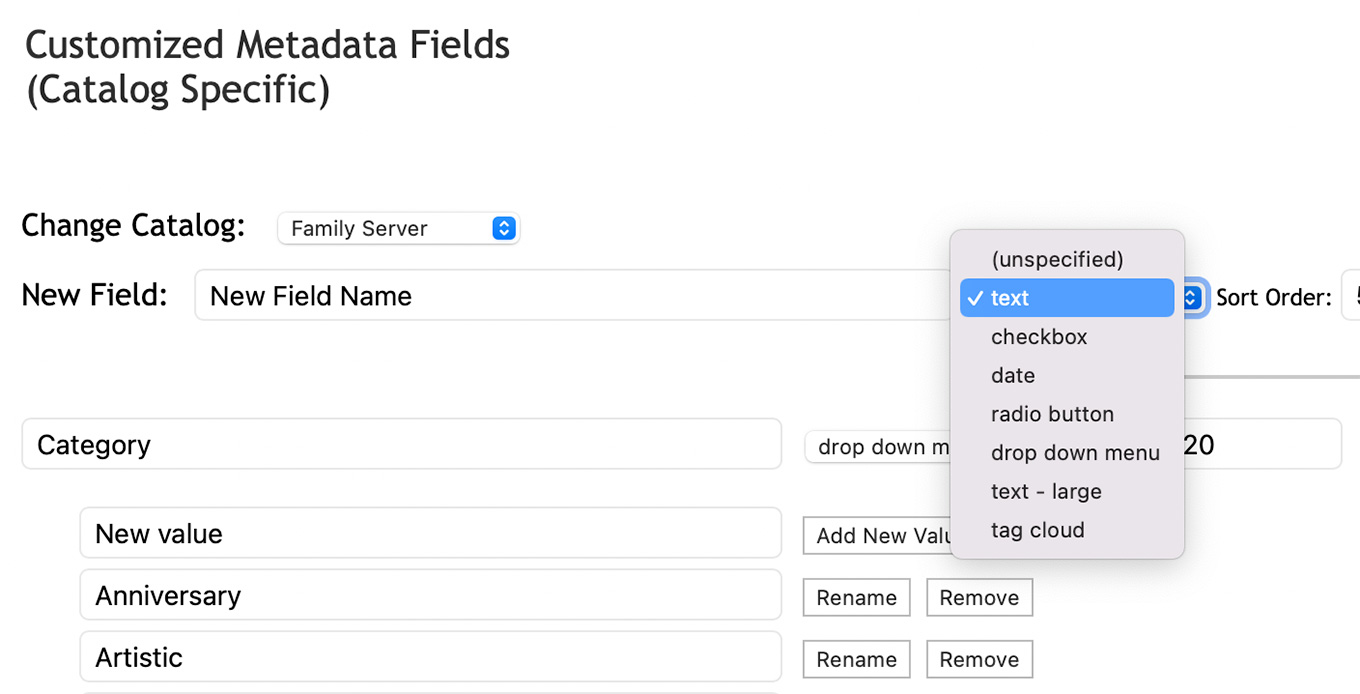

When creating a new user there are many options on what they can do within the system. These can be changed by an administrator at any time.

There’s no technical limit to the number of custom metadata fields that you can add to a catalog. (Catalogs are individual storage volumes scanned by Axle.ai, like a single hard disk, RAID, or server volume.) However, Axle recommends limiting custom fields to no more than a few dozen, as it gets clunky to scroll through more fields than that. Metadata created by speech-to-text transcription or facial recognition is searchable and shown as segments on the timeline.

Each custom field can be configured into one of seven data types. Text fields have an 8,000 character limit. New fields can be added at any time.

Truthfully, I’m still trying to figure out the best combination of custom fields to help me find the images I need without forcing me to spend hours entering data.

PREVIEWING STUFF

You access Axle.ai from a web browser on your local system. (You generally don’t access images from the server itself, though you can in a pinch.)

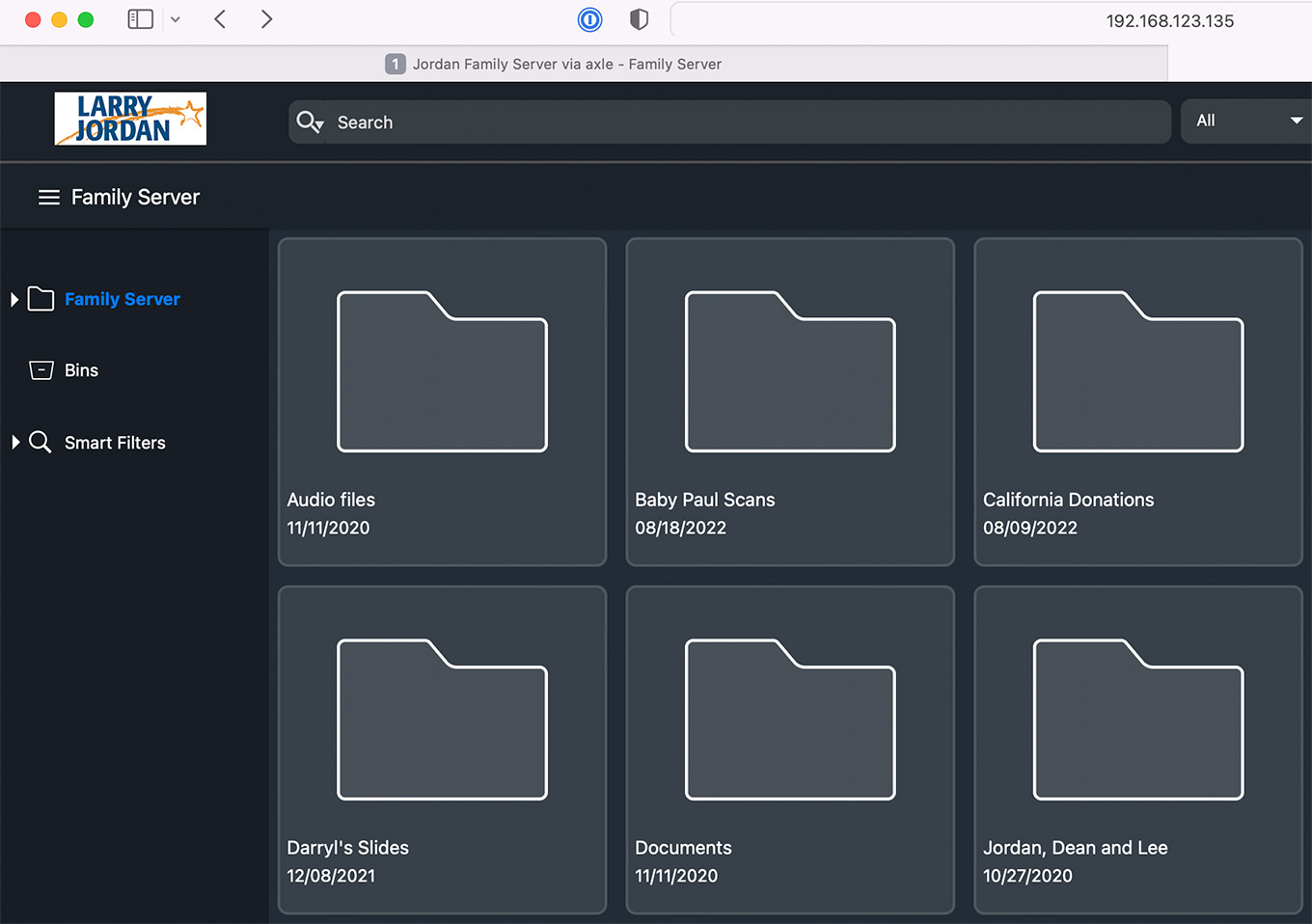

Type in the URL of the server to login and display the Axle home screen. Storage locations are displayed on the left, with icons for individual folders on the right.

Axle does not store your media; it simply points to wherever the media is stored.

NOTE: A BIG benefit to Axle is that you don’t need to reorganize any media files. It catalogs whatever you’ve got wherever it’s stored. This means that spending time thinking about where to store files pays dividends once Axle starts cataloging. In my case, I try to store related files in the same folder.

Just as with any computer, double-click a folder to display previews of the contents.

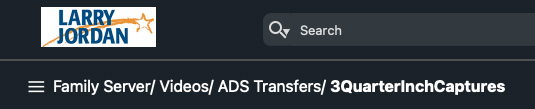

The “bread crumb trail” at the top left simplifies navigation.

Axle can preview just about anything. Hover over any video preview to skim through it.

NOTE: Preview size can be modified using the Admin pages. Because my library is mostly stills, I set the preview size fairly large.

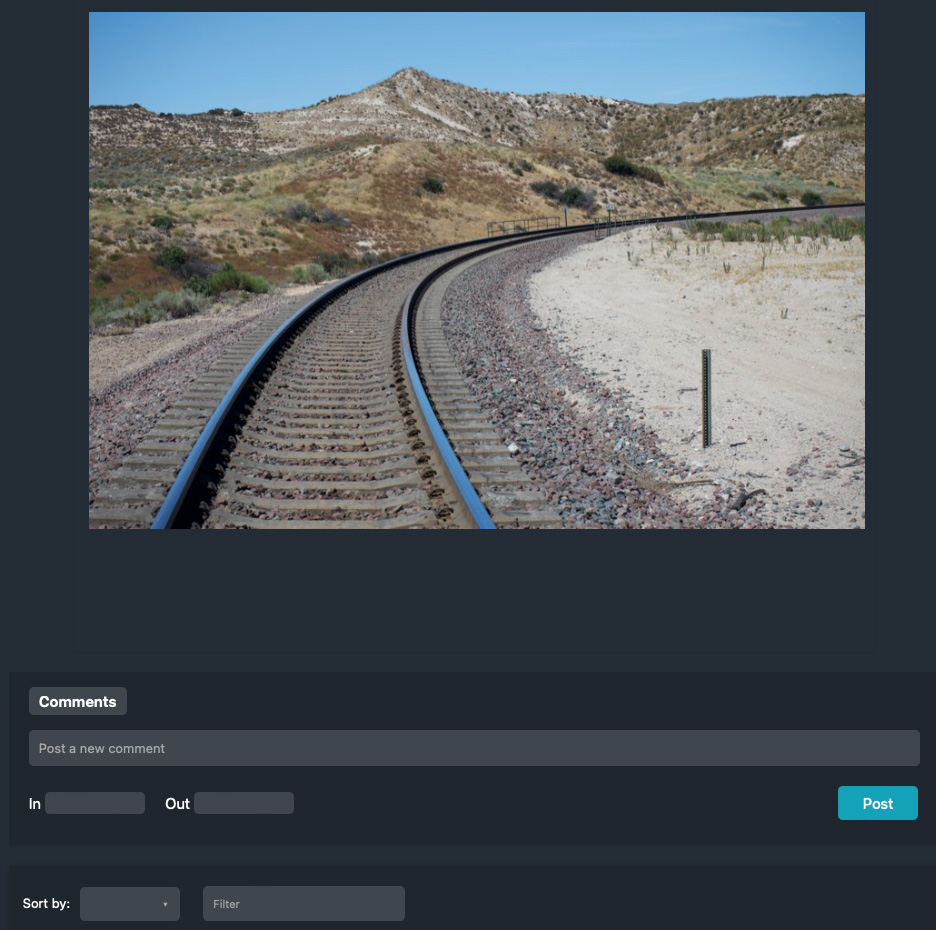

To see a larger preview, double-click its icon. This screen shows the options for a still image.

NOTE: Still image previews are limited in size to save network bandwidth. Download the image to view it at the highest quality.

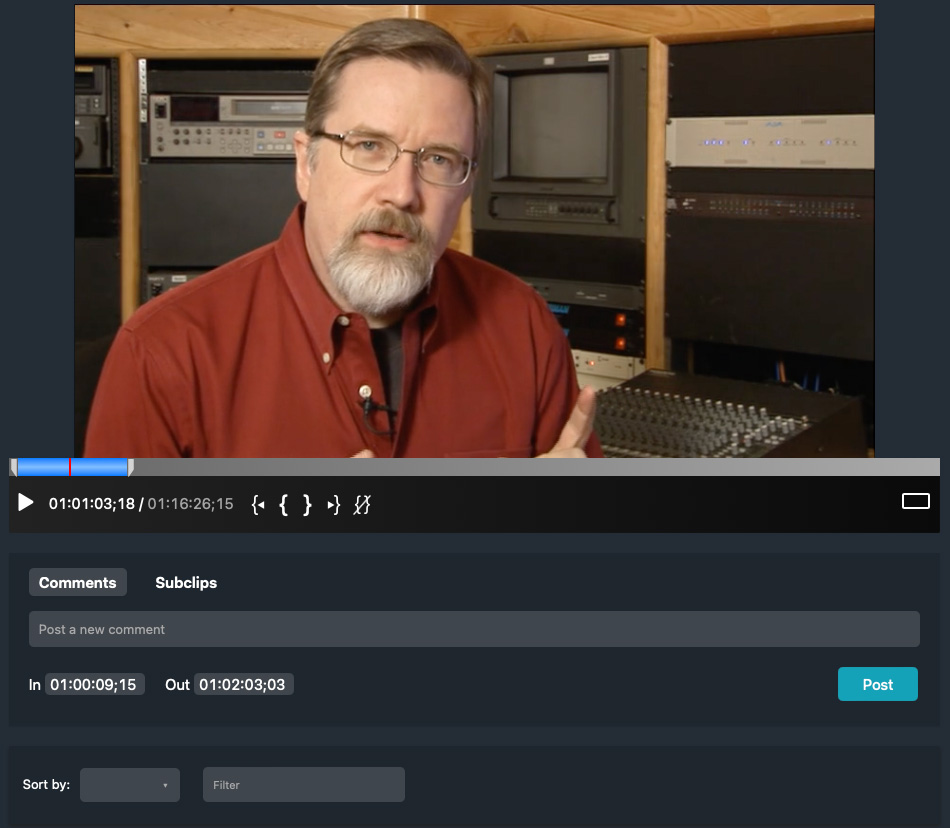

This screen displays a video preview. Axle can create subclips for editing, view the video at full screen or transfer it to your NLE for additional editing.

NOTE: Audio-only files can be previewed as well. Axle displays the waveforms and allows markers, subclipping and timeline metadata for audio files in much the same way it does for video.

CUSTOM METADATA

Axle automatically creates a wide variety of searchable data, such as file name, image size, type and format. However, the real strength of any DAM, and its most labor-intensive part, is adding custom metadata (labels) that define the contents of your media so you can find what you want when you want it.

The good news is that Axle is highly customizable. The bad news is that adding all this custom data to each image takes a LOT of time. But that would be true of any system, not just Axle.

UPDATE: After reading this review, Sam Bogoch commented: “Two things make this process much easier. One is that Axle supports batch creation of metadata, so you can enter metadata for up to 50 individual files at once, or even across a whole folder’s contents. This is a big time-saver.

“The other major enhancement is Axle AI’s new AI/ML capabilities, which include automated speech transcription, face recognition, object recognition and logo recognition. These are priced on a usage basis, but create a huge amount of timeline metadata automatically. This can make your media much more searchable without your having to invest a lot of time in manual tagging.”
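To picture what batch entry means for a large folder, here is a small sketch that splits a list of files into groups of at most 50, the limit Sam mentions, and pairs each group with the same metadata. It is only a planning aid under stated assumptions: the names `batches` and `tag_batches`, the file names and the example field are all hypothetical, and nothing here talks to Axle itself.

```python
from typing import Dict, Iterator, List, Sequence

BATCH_LIMIT = 50  # per Sam's comment: metadata for up to 50 files at once


def batches(files: Sequence[str], size: int = BATCH_LIMIT) -> Iterator[Sequence[str]]:
    """Yield consecutive slices of `files`, each holding at most `size` items."""
    if size < 1:
        raise ValueError("size must be at least 1")
    for start in range(0, len(files), size):
        yield files[start:start + size]


def tag_batches(files: Sequence[str], metadata: Dict[str, str]) -> List[dict]:
    """Plan one metadata entry pass per batch (hypothetical structure)."""
    return [
        {"files": list(batch), "metadata": dict(metadata)}
        for batch in batches(files)
    ]


# Example: 120 scanned stills that all share one (made-up) custom field value.
scans = [f"scan_{i:04d}.tif" for i in range(120)]
plan = tag_batches(scans, {"Event": "Reunion"})
```

Here `plan` holds three passes of 50, 50 and 20 files, so 120 images need three rounds of data entry instead of 120.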

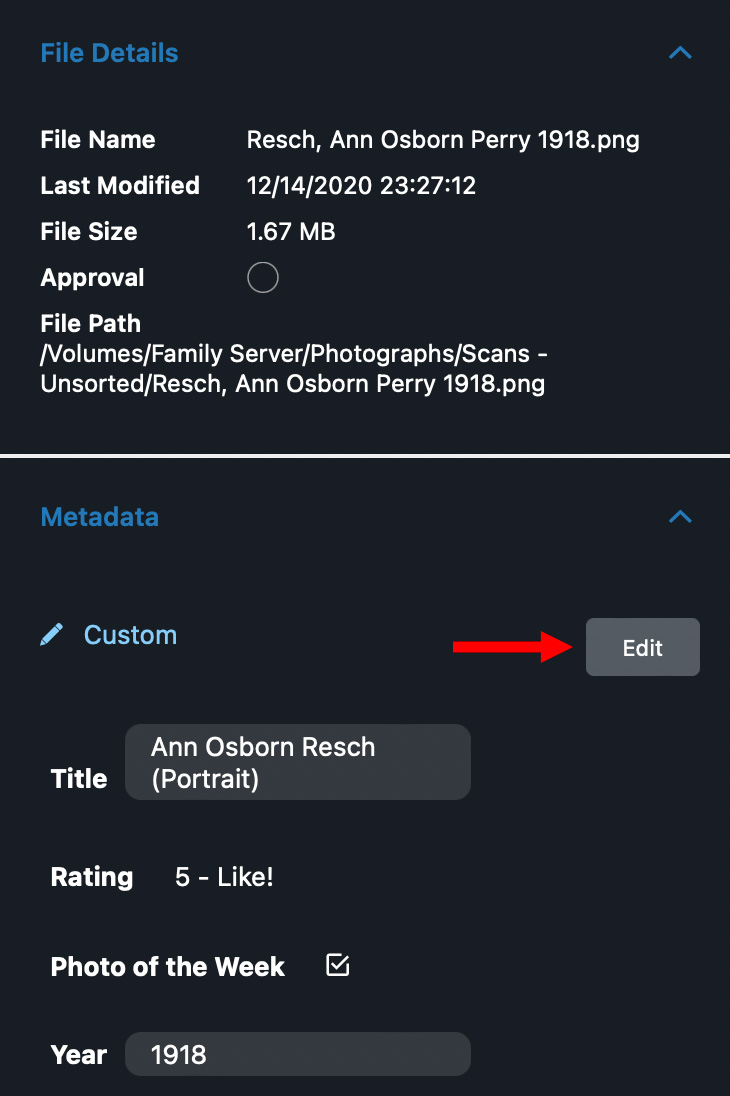

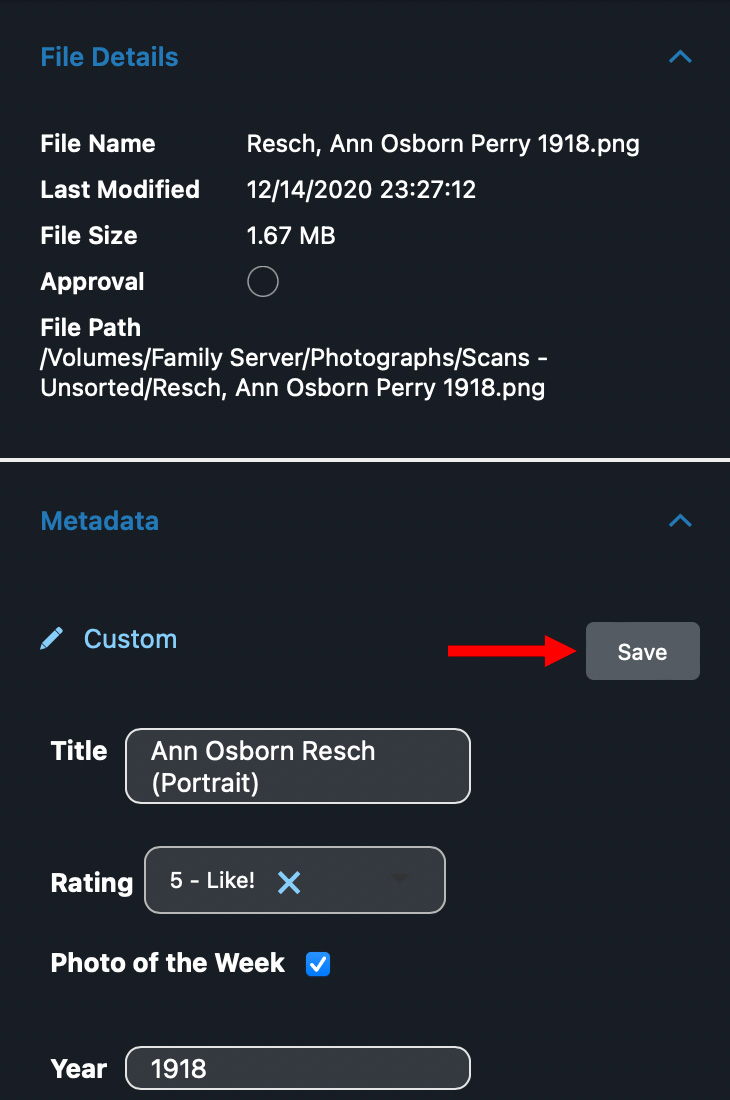

Metadata – both automatic and custom – is displayed in a sidebar on the right of the frame. If the fields don’t all fit, you can scroll up and down.

NOTE: Axle has a built-in three-stage approval process for every asset: Approve / Review / Reject.

To enter metadata, click the Edit button.

To save custom metadata, click the Save button.

NOTE: If you fail to click Save before navigating to another image, Axle pops up a warning giving you the option to save your work. However, this button doesn’t seem to work.

FINDING STUFF

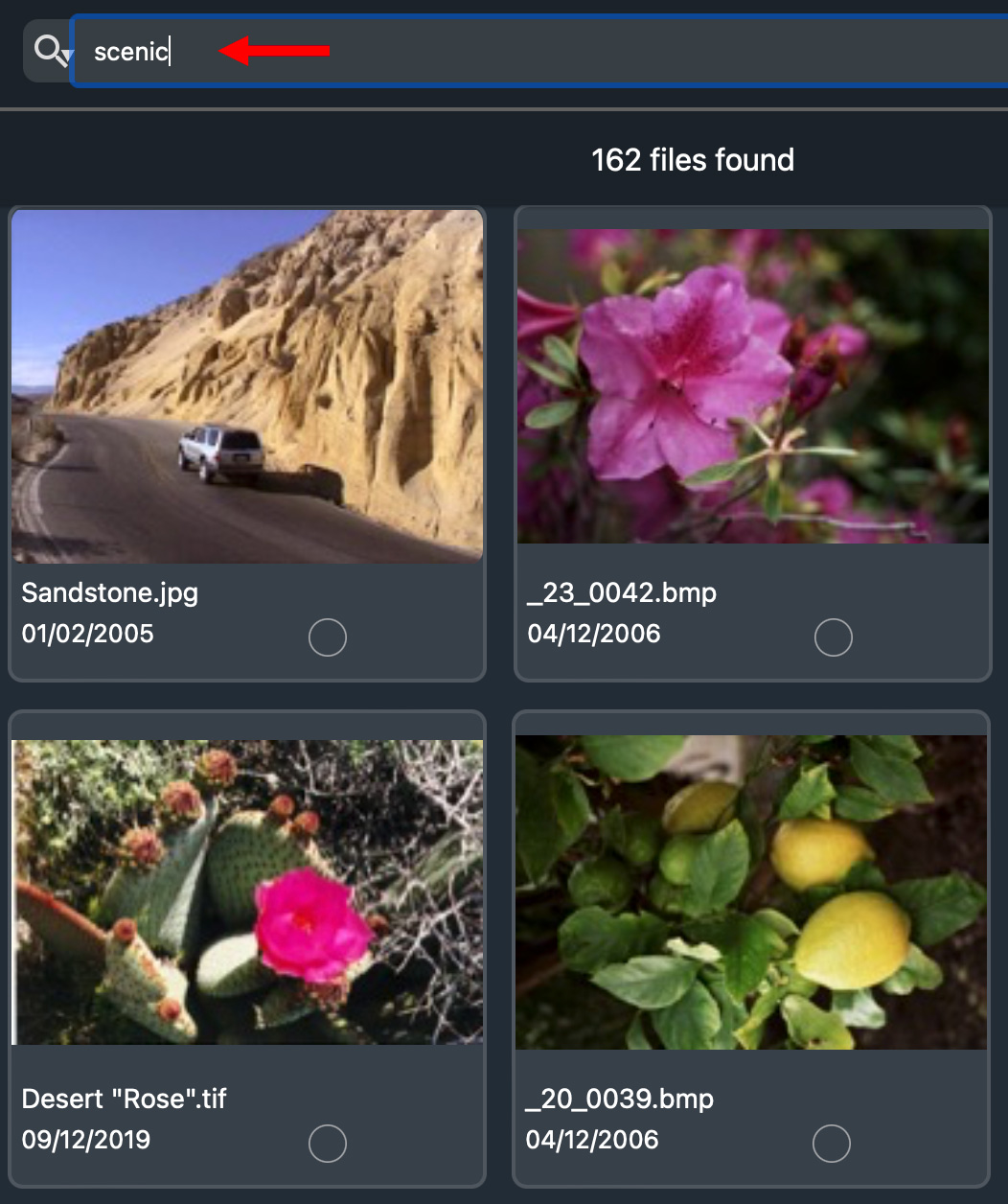

At the top of the window is the Find box. Enter a word and, almost instantly, all images that match it are displayed. Axle uses what’s called “Elastic Search,” similar to a Google search: you type English-like words or phrases and it translates them into specific search criteria.
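For readers curious what “translating words into search criteria” can look like, here is a sketch of a request body in the query language of the open-source Elasticsearch engine, using its `simple_query_string` query type. How Axle actually builds its queries is not documented in this review; the field names `filename` and `description`, the function name `build_search_body` and the default result size are assumptions made purely for illustration.

```python
import json
from typing import Sequence


def build_search_body(
    text: str,
    fields: Sequence[str] = ("filename", "description"),  # ASSUMED field names
    size: int = 25,
) -> dict:
    """Build an Elasticsearch-style request body for a free-text search."""
    return {
        "size": size,  # maximum number of hits to return
        "query": {
            "simple_query_string": {
                "query": text,              # the words typed into the Find box
                "fields": list(fields),     # which indexed fields to search
                "default_operator": "and",  # every word must match
            }
        },
    }


if __name__ == "__main__":
    print(json.dumps(build_search_body("wedding 1952"), indent=2))
```

The point of the sketch is that the person searching types only “wedding 1952”; the structured criteria are assembled behind the scenes.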

NOTE: My system has close to 100,000 images in it and search results display within a second. Axle is VERY fast.

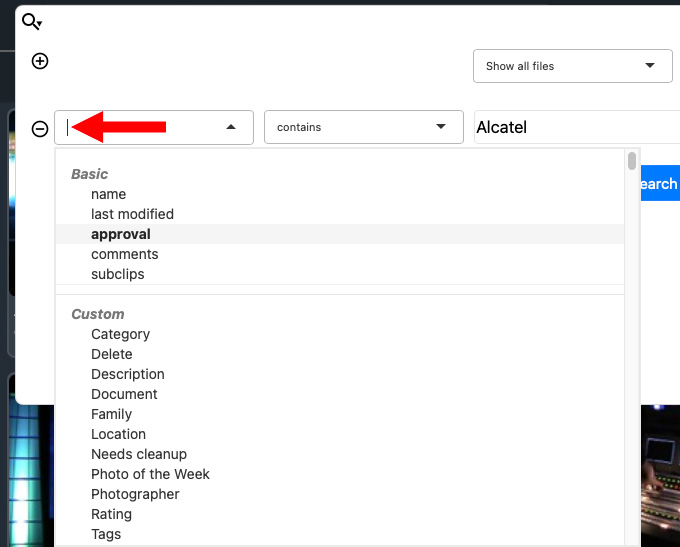

However, the real power of search is revealed when you click the Magnifying Glass icon. This opens up a search window where you can specify exactly what you want to search on.

Your ability to find stuff is directly dependent upon how you name and organize your source files and the metadata you assign to each file. It is worth hiring a freelance librarian or archivist to get your system properly set up and to set standards for data entry.

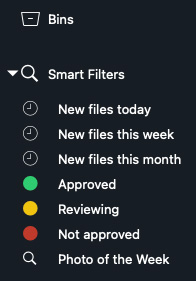

By default, Axle.ai has six “Smart Searches” to display the latest files or project status. However, while each user can create as many custom Smart Searches as they want, these can’t be created on the Axle server and pushed out to all clients. If you need multiple users to access the same Smart Search, it needs to be created separately on each user’s computer.

NOTE: As well, you can create custom bins to store subclips, and export them to your favorite video editing software. By default, these are shared between users.

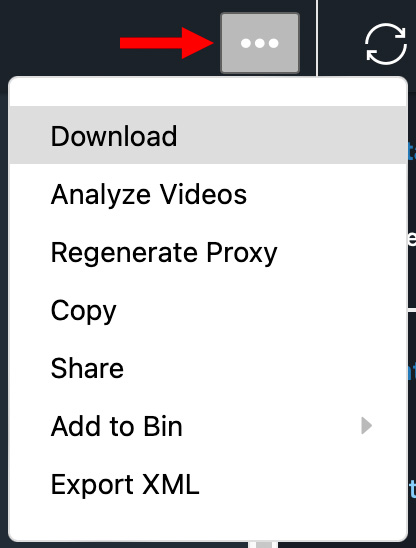

Once you’ve found a file, or files, you have a variety of things you can do with it. If the images are stored on a network volume and all users have access to that volume, you can quickly download the file to a local computer for editing or processing.

Files can also be drag-and-dropped into another application.

COOL THINGS I CAN’T DEMO

THINGS AXLE COULD IMPROVE

Here are several improvements I’d like to see Axle make:

Website

Interface

SUMMARY

I really like how quickly Axle finds clips. Search is very flexible. And now that I have preview files set to a large enough size, I can easily see the contents of each image.

No software is perfect, but of all the asset managers I’ve looked at, Axle does a better job than anything else.

Axle.ai is a fast, powerful and customizable media asset management system that is designed for the needs of a small- to medium-sized workgroup. While requiring a dedicated server, its web browser interface easily handles searches through hundreds of thousands of assets without breaking a sweat. While optimized for video clips, it easily expands to handle still images. While the audio preview is not as sophisticated as SoundCloud, the ability to find and listen to audio clips is also a benefit. And its customization options are excellent.

INTERVIEW WITH SAM BOGOCH

As part of this review, I emailed Sam Bogoch, CEO of Axle.ai, several questions to learn more about Axle.ai.

Larry: How would you describe the current state of asset management software for individuals and smaller organizations?

Sam: It’s largely absent! Something like 99% of the video creators and teams who could benefit from media asset management (MAM) don’t have it.

So they rummage through hard drives, click through folders on network drives and waste hours of time trying to find content that should be visible right away.

Larry: How would you describe Axle.ai?

Sam: We’re pioneers in affordable, lightweight MAM software. We make your media smarter, and much easier to search and repurpose.

Larry: Who is your core market?

Sam: Video teams of 3 to 30 people, in a variety of settings: corporate, broadcast, sports, documentary, houses of worship, universities, and venues. It turns out that while these settings sound very different, the work done by these teams is remarkably similar. They capture content, they edit it, and post the edits to social media.

Larry: One of the strengths of Axle.ai is that it is multi-user, using a web browser for access. While it is easy for team members on the same network to access the Axle server, how can remote users access Axle?

Sam: We offer 3 options: first, a Reverse Proxy service at $60 per month that allows secure remote access. Second, our new axledit cloud collaboration tool allows broader remote access including shared timeline editing and review and approval. Finally, for the ultimate in security, customers can set up a VPN for maximally secure remote access.

Larry: Why isn’t the app available through the Mac App Store?

Sam: The App Store only supports very specific types of applications, which don’t use system functions to the degree that axle ai’s server does. We turn the Mac (usually a Mac Mini, Mac Studio or Mac Pro) into a webserver using integrated tools like Apache, Elastic Search and PostgreSQL. So while it’s a simple download and four-click install, it’s not eligible for the App Store (yet). Bear in mind that this only applies to our server software, which is available for Mac, Windows and Linux environments; the clients are nearly any web browser, laptop or mobile, and require no installation at all.

Larry: You and I have had long discussions on ways to improve the Axle user interface. What do you see as the essential information that an asset management system needs to provide?

Sam: People need to be able to find their content, in as intuitive and simple a way as possible. Several tools have come and gone which had a “geekier” approach to solving this problem, but our customers tell us we’re able to give them all the power they expect – and more, with our new AI/machine learning capabilities – without asking them to become techies or reach for a manual.

Larry: One of the biggest obstacles to asset management is the amount of time required to enter descriptive metadata. As an example, a library filled with books is useless without a well-indexed card catalog to find the book you need. How does Axle help us find assets by content?

Sam: This is a capability we’ve really built out over the last couple of years. In the axle ai 2022 release, you can search based on what was said (integrated AI transcription), who appears in the video (integrated AI face recognition), what objects appear in the video (integrated AI object recognition), and what logos appear (integrated AI logo recognition).

We provide these as built-in modules at a very affordable, predictable cost, unlike our competitors who only offer expensive per-hour tools from the big cloud vendors. With our approach, you can automatically tag all your media, and search it effortlessly. We strongly believe that by 2024, any video that you take the trouble to shoot should be AI-tagged in depth.

Larry: What flexibility exists to customize the interface?

Sam: There are several customization options, including fully configurable metadata fields, custom logos that can be set up per-client, and a “hide-able” catalog list section. For enterprise customers who can do their own web development, we also have a full REST API that we use to build our own user interface and plug-in panels, a practice known as “eating your own dog food”. Customers can access these APIs to build fully custom user interfaces if they prefer.

Larry: What other services does Axle provide to help us store and locate our media?

Sam: Our axle connectr workflow tool is a real breakthrough in no-code automation of media workflows. It runs on Mac, Windows or Linux and lets you build very sophisticated automations without writing code. In addition, we have axle Speech services (a $1 per hour, highly accurate AI/ML speech transcription service), and axle Tags, our AI/ML platform for face, object, and logo recognition.

Larry: What does it take to get started with Axle? How far can we expand it?

Sam: 2-person teams can start at as little as $100 per month ($50 per user), or opt to purchase a perpetual license of various software configurations for $3,000-$10,000. Likewise, larger teams can opt for either SaaS pricing or perpetual license pricing. In either case, add-on services like axle Speech and axle Tags, as well as our Reverse Proxy service, are priced on a SaaS basis. Meanwhile, we also have large customer sites with hundreds of users and in some cases even sophisticated hub-and-spoke configurations of axle, with hybrid cloud deployments across servers in multiple geographies accessing petabytes of media.
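As a rough worked example of the numbers Sam quotes, this sketch estimates how many months of subscription it takes to equal the price of a perpetual license. It makes loud simplifying assumptions that are mine, not Axle's: the perpetual price is treated as covering the same number of users, and add-on services, support fees and upgrades are ignored. The function names are hypothetical.

```python
import math

MONTHLY_PER_USER = 50  # dollars per user per month, as quoted in the interview


def monthly_cost(users: int) -> int:
    """Subscription cost per month for a team, excluding add-ons."""
    return users * MONTHLY_PER_USER


def break_even_months(users: int, perpetual_price: int) -> int:
    """Whole months of subscription needed to reach the perpetual price.

    ASSUMPTION: the perpetual license covers the same number of users and
    has no recurring fees; real quotes may differ.
    """
    return math.ceil(perpetual_price / monthly_cost(users))


# Two-person team, low and high ends of the quoted $3,000-$10,000 range.
low = break_even_months(2, 3_000)
high = break_even_months(2, 10_000)
```

For a two-person team paying $100 per month, `low` is 30 months and `high` is 100 months, so the choice depends heavily on which software configuration you need and how long you expect to keep it.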

Larry: If you were to describe Axle.ai to a new user in a single paragraph, what would you say?

Sam: It’s the simplest, most powerful way to make large amounts of media searchable.

Larry: Axle.ai pricing seems to be rental only. Does Axle still offer options to purchase the software?

Sam: Yes – we think it’s vitally important that if you own the media and want to own the metadata that goes with it, that you have the option of owning the software that houses and searches the metadata, and that all the metadata be easily exportable. Vendors come and go – in fact, dozens of our competitors have been acquired during the decade we’ve been in business – and your metadata, with growing value, is too important to trust to a SaaS platform alone. Over 90% of our customers purchase a permanent license for axle ai, and get add-on services like our axle Speech and axle Tags AI metadata, Reverse Proxy remote access service and Axledit browser-based collaborative editor on a monthly basis.

Larry: Are all media assets stored locally at the customer site, or does Axle provide a cloud service for storing assets in the cloud? If so, who owns cloud-based media and what is Axle’s privacy and data protection policy?

Sam: The majority of our customers have what I would call a hybrid cloud configuration, with large amounts of on-premise storage complemented by selective and growing use of cloud storage. We believe strongly that all content and metadata is our customer’s alone, and have built our whole business around this point of view.

Larry: Since Axledit is a cloud-based editing platform, what information does Axle track for users of Axledit and what does it do with that data? (The reason for the question is that your website compares Axledit to Google Docs and we all know that nothing in Google Docs is secure.)

Sam: Unlike Google, we don’t perform any searches across our users’ data. The searches of each user’s or group’s content are completely private to them, and are self-contained across their media and metadata.

Larry adds: Sam, thanks for sharing your time for this interview.

2 Responses to Product Review: Axle.ai Media Asset Management

[…] Industry expert Larry Jordan recently published an in-depth review of axle ai 2022 at https://larryjordan.com/articles/product-review-axle-ai-media-asset-management/ […]
