[ Updated Nov. 19, 2023, with a speed correction for RAID 4 and 1+0]

With the advent of the M1 and, now, M2 chips from Apple, the performance of our computers has never been better. Editing any video format 4K or smaller is no longer a big problem.

Except… fast as our computers are, if we don’t have fast storage to go with it, much of that computing horsepower is wasted. It’s like filling a water wheel with a garden hose – it will work, but you’ll get much more work done if that water wheel is fed by a river.

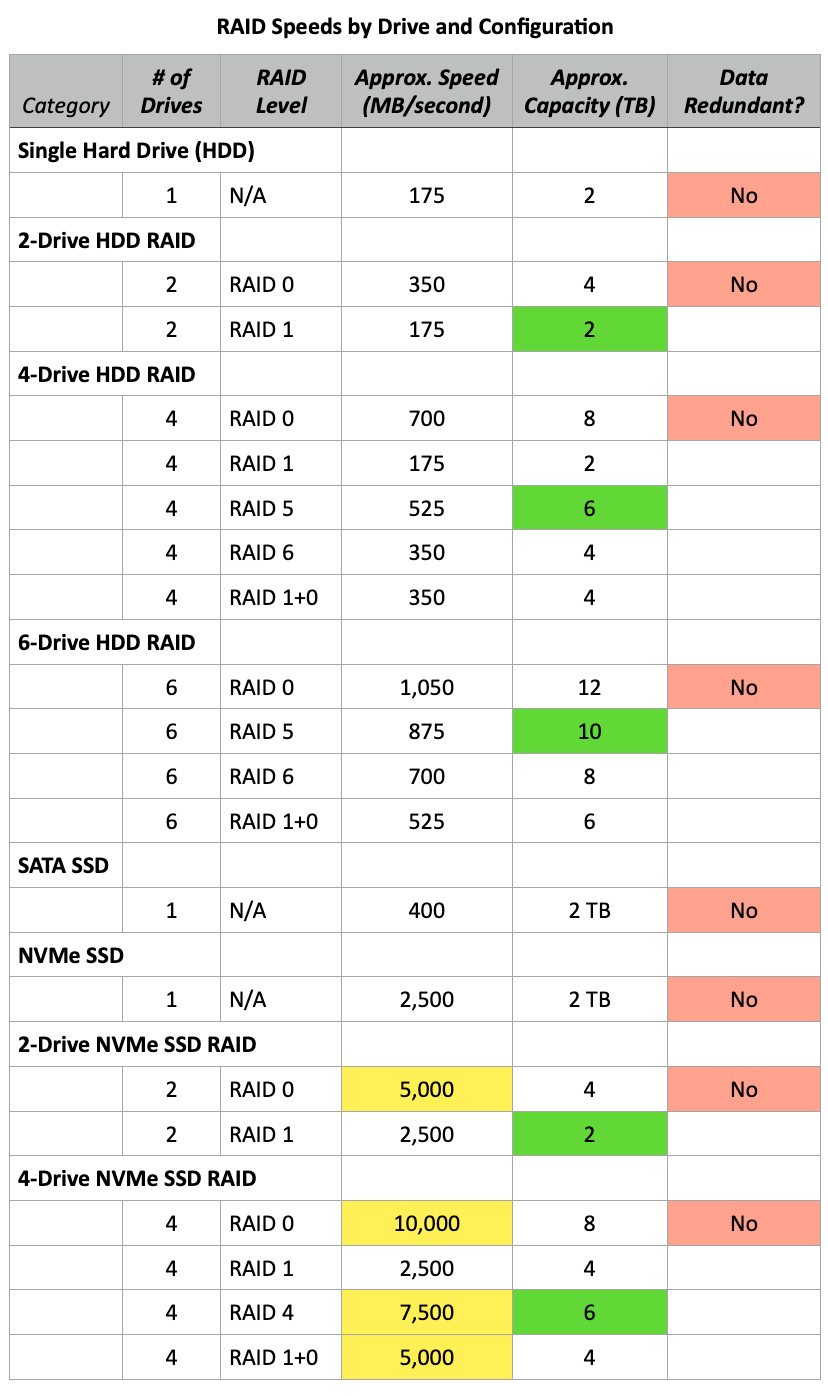

In the past we used hard disk drives (HDD) as our primary storage. They didn’t cost much, held a lot and transferred data pretty quickly; around 150-200 MB/second.

However, far too often, a single HDD wasn’t fast enough for large frame size or Log media files, so we combined multiple HDDs into a RAID (Redundant Array of Inexpensive Disks). This quickly increased capacity and pushed transfer speeds up to about 1,000 MB/second, depending upon the number of drives, how the RAID was configured and how full the drives were. But these RAIDs suffered from latency, which made multicam editing challenging. They were also noisy and kinda big.

Next came SATA SSDs (Solid State Drives). One of these puppies was about 3X faster than a single hard drive, transferring data around 400 MB/second. They were also dead quiet, small and stayed reasonably cool. However, they connected over the SATA interface, which tops out at roughly 600 MB/second and limits how fast they can transfer data.

The latest iteration of SSDs is NVMe (Non-Volatile Memory express). These can, depending upon configuration, transfer data up to 3,000 MB/second. These, too, are small and quiet, but unlike SATA SSDs, NVMe can use multiple PCIe lanes for both reading and writing.

However, while SSD speeds increased, a single SSD still holds far less than a single HDD. While SSD speed is outstanding, we often need to combine multiple SSDs into a RAID to get the storage capacity we need for editing media.

RAID LEVELS

Just as with HDD RAIDs, SSD RAIDs can be configured into multiple levels, from RAID 0 to RAID 1+0. These levels provide differences in performance and data security.

Based on my tests, RAID 0 is fastest, followed by RAID 1+0, then RAID 4. RAID 0 has the greatest capacity, followed by RAID 4, then RAID 1+0. Here’s a table of theoretical speeds that illustrates this.
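The capacity ranking follows from how each level spends its drives. Here is a minimal sketch in Python, assuming four identical 2 TB blades (the function name and the drive count are illustrative, not taken from any OWC tool):

```python
def usable_capacity_tb(level: str, drives: int, drive_tb: float) -> float:
    """Usable capacity of a RAID built from identical drives."""
    if level == "0":
        # Striping only: every drive holds data, nothing is redundant.
        return drives * drive_tb
    if level in ("4", "5"):
        # One drive's worth of space is spent on parity.
        return (drives - 1) * drive_tb
    if level == "1+0":
        # Every drive is mirrored, so half the raw space is usable.
        return drives / 2 * drive_tb
    raise ValueError(f"unsupported RAID level: {level}")


for level in ("0", "4", "1+0"):
    print(f"RAID {level}: {usable_capacity_tb(level, 4, 2.0):.0f} TB usable")
```

With four 2 TB blades, this gives 8 TB for RAID 0, 6 TB for RAID 4 and 4 TB for RAID 1+0, which matches the ordering above.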

USE THE RIGHT CONNECTION PROTOCOL

When connecting an SSD or RAID to your computer, it is important that you pick a protocol that matches the performance. This means Thunderbolt 3 or 4 or USB-C. Any other USB version will be far slower than your SSD, impacting performance.

NOTE: Thunderbolt 2 can also work, but has half the data transfer rate of Thunderbolt 3 or 4. If performance is your goal, use Thunderbolt 3 or 4.

MAXIMIZING PERFORMANCE

Thunderbolt 3 or 4 limits maximum data transfer speeds to 2,800 MB/second (2.8 GB/second). Since that’s close to the maximum speed of NVMe, I wondered why we would bother creating an SSD RAID in the first place.

For example, I was thinking of buying an OWC ThunderBlade RAID, which combines up to four NVMe SSDs into a single unit. So, I contacted Tim Standing, VP of Software Engineering at OWC, to help me understand how to configure an SSD RAID.

DEFINING TERMS: A “blade” is a single SSD. A RAID contains multiple SSDs, generally four. The OWC Express 4M2 is an earlier stand-alone Thunderbolt chassis that contains up to four M.2 SATA or NVMe SSD drives. The OWC ThunderBlade is a newer model stand-alone Thunderbolt chassis that holds up to four M.2 NVMe SSDs. All run on Mac and Windows systems. The OWC Accelsior is a PCIe card that plugs into PCIe slots on Windows or Linux PCs or the Mac Pro. Here’s a link to OWC’s SSD RAIDs.

Larry: A single NVMe SSD transfers data at almost 2.5 GB/sec. This approaches the maximum speed of Thunderbolt 3 or 4. What’s the advantage of creating a RAID of NVMe SSDs, when we can’t take advantage of the speed that a RAID of SSDs would create?

Tim: Yes, with NVMe M.2 blades it’s possible to approach maximum Thunderbolt speeds with a single blade, which means that RAID will offer minimal performance gains. However, most RAID levels (0, 1+0, 4, 5) also combine blades to increase the capacity of the volume, which is larger than any single blade.

More importantly, RAID levels 1, 1+0, 4, and 5 also offer data safety features — if a single blade fails, your data remains safe. And if speed is critical, you can connect more than one enclosure, each to a separate Thunderbolt bus, to increase speed well above the performance of any single bus. This is a feature that isn’t possible with hardware-based RAID solutions.
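For the parity-based levels (4 and 5), the safety comes from XOR parity: the parity block is the XOR of the data blocks in a stripe, so any one missing block can be recomputed from the rest. The sketch below illustrates the general technique in Python with made-up four-byte blocks. It is not SoftRAID's code, and RAID 1 and 1+0 protect data by mirroring instead.

```python
from functools import reduce
from operator import xor


def xor_blocks(blocks: list[bytes]) -> bytes:
    """XOR equal-length byte blocks together, one byte position at a time."""
    return bytes(reduce(xor, column) for column in zip(*blocks))


# One stripe spread across three data blades (illustrative contents).
data = [b"clip", b"-001", b".mov"]

# The fourth blade stores the parity of the stripe.
parity = xor_blocks(data)

# Simulate losing the second data blade, then rebuild its block
# from the two surviving data blocks plus the parity block.
survivors = [data[0], data[2], parity]
rebuilt = xor_blocks(survivors)

print(rebuilt == data[1])
```

The same calculation works no matter which single blade is lost, which is why these levels survive one failure but not two.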

Larry: Wouldn’t it be just as effective, yet far cheaper, to simply connect a single NVMe SSD to our computer than create an SSD RAID?

Tim: A single NVMe SSD would be effective for some customers, but the failure of that single blade could result in the loss of all data. As described above, a single blade limits potential volume size and performance.

Simpler is always better. If your entire working set of files fits on 2 or 4 TB and you back up every night, then one of our OWC Envoy Pro FX drives is the best way to go.

If you need more capacity or back up less frequently, you should probably be using a fault-tolerant RAID volume. Most of our customers are using the ThunderBlade or Flex 1U4 for installation on a DIT cart or one of the Accelsior 4M2 or 8M2 cards (see the next section) in a 2019 Mac Pro for audio or video editing or grading. VFX seems to be mainly done on Linux, and we don’t have much of a footprint in that market, although the Accelsior 4M2 and 8M2 work perfectly in Linux.

Larry: Why not sell the Express 4M2 with PCIe SSDs, which are far cheaper than NVMe, yet, when RAIDed together would totally fill the bandwidth of a Thunderbolt 3/4 pipe?

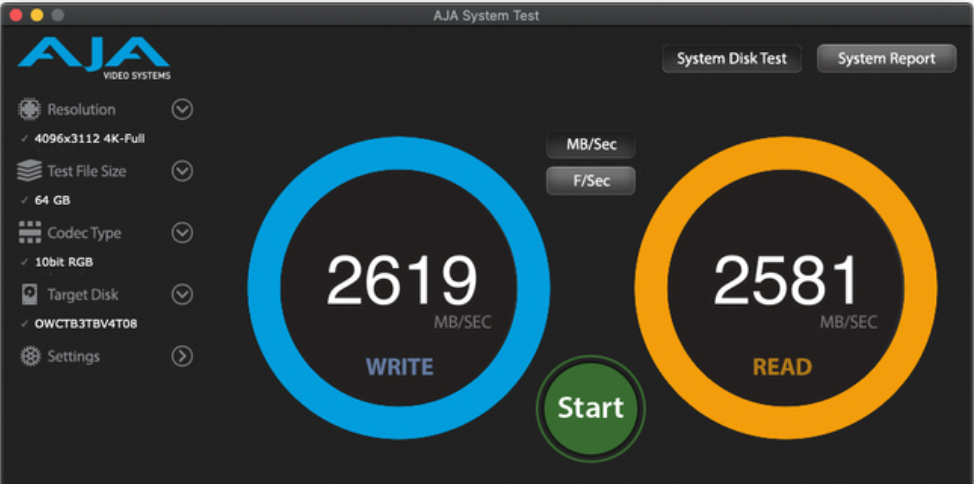

Tim: We have been selling these solutions for over a year, [in] the Accelsior 4M2 and Accelsior 8M2. The 4M2 is almost 3 times the speed of Thunderbolt at 6 GB/sec read and write on RAID 0 (as measured with AJA System Test). It is an 8 lane PCI Gen 3 card and will use all the speed available in the 2019 Mac Pro, which is also PCI Gen 3.

The Accelsior 8M2 is a real beast as it uses 16 lanes of PCI Gen 4. On a 2019 Mac Pro using AJA System Test, we see speeds of 12 GB/sec with a RAID 0 volume. This is significantly faster than a single NVMe blade. In addition, you can create a 64 TB volume, much larger than anything available now in a single blade.

If you install two Accelsior 8M2s, you can create a 16-disk volume which will sustain 17 GB/sec using AJA System Test. And if you are on a PC with PCI Gen 4 running Windows, you can expect to see 20 GB/sec from a single Accelsior 8M2.

Larry: My understanding is that RAID 5 is preferred for HDD media to provide maximum capacity and data transfer speed, while still protecting data in case one hard drive dies. What’s the recommended configuration for SSD RAIDs and why?

Tim: We recommend RAID 4 for RAIDs with SSDs. The implementation of the write code for RAID 4 and RAID 5 in SoftRAID is identical, so there is never any speed difference. I did this to make the coding simpler, which minimizes the chances of introducing bugs. This also means the write performance [of RAID 4 or 5] is exactly the same.

For reads, RAID 4 is always going to be faster than RAID 5 when the sum of the speed of all the individual storage devices is faster than the speed of the bus they are connected on. For example, let’s take a ThunderBlade: 4 NVMe blades connected over Thunderbolt. Each blade can transfer at 975 MB/sec (the ThunderBlade uses only one PCI lane per blade), so the aggregate speed of the 4 blades reading at the same time is 4 * 975 MB/sec = 3.9 GB/sec, but the total storage bandwidth of Thunderbolt is 2.8 GB/sec. This means the blades can always supply data faster than the Thunderbolt bus can transfer it.
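Tim's arithmetic can be written as a one-line model: the read speed you can deliver is the smaller of what the blades supply and what the bus carries. This is a rough Python sketch using the theoretical figures quoted above; the function name is made up for illustration.

```python
def bus_limited_read_mb_s(blades: int, blade_mb_s: float, bus_mb_s: float) -> float:
    """Deliverable read speed: blade aggregate, capped by the bus."""
    aggregate = blades * blade_mb_s
    return min(aggregate, bus_mb_s)


BLADE_MB_S = 975         # per-blade speed in a ThunderBlade (one PCI lane each)
THUNDERBOLT_MB_S = 2800  # storage bandwidth of Thunderbolt 3/4

for blades in (1, 2, 3, 4):
    speed = bus_limited_read_mb_s(blades, BLADE_MB_S, THUNDERBOLT_MB_S)
    print(f"{blades} blade(s): {blades * BLADE_MB_S} MB/s supplied, {speed} MB/s delivered")
```

In this model the bus becomes the bottleneck as soon as three blades read at once (3 * 975 = 2,925 MB/sec), which is the situation the RAID 4 versus RAID 5 comparison depends on.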

Remember that a RAID 4 volume has a dedicated parity disk, whereas RAID 5 spreads the parity data over all the disks. So if I read from a 4-drive RAID 4 volume, I can just skip the parity disk and read from the 3 data disks; 100% of the data I read is file system data. With the same drives configured as RAID 5, I have to read from all 4 drives and then discard 25% of the data: the parity data, which I don’t need unless one of the drives has failed. This means that only 75% of the data I read is file system data, and my expected performance is 75% of the total data transfer rate from the drives. So, when the bus is the bottleneck, a RAID 5 volume [for SSDs] should be about 75% of the speed of a RAID 4 volume created with the same drives. In practice, we see that it is about 80%.
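The 75% figure can be reproduced with a small model. This Python sketch follows the reasoning as described (RAID 4 reads only the data disks, RAID 5 reads every disk and discards the parity share) and uses the theoretical speeds quoted earlier. The function and its simplifications are assumptions for illustration, not a description of SoftRAID internals.

```python
def useful_read_mb_s(level: str, drives: int, drive_mb_s: float, bus_mb_s: float) -> float:
    """File system data delivered per second for a sequential read."""
    if level == "4":
        drives_read = drives - 1   # skip the dedicated parity disk
        useful_fraction = 1.0      # everything read is file system data
    elif level == "5":
        drives_read = drives       # parity is spread across all disks
        useful_fraction = (drives - 1) / drives
    else:
        raise ValueError(f"unsupported RAID level: {level}")
    raw = min(drives_read * drive_mb_s, bus_mb_s)  # the bus caps the raw rate
    return raw * useful_fraction


raid4 = useful_read_mb_s("4", 4, 975, 2800)
raid5 = useful_read_mb_s("5", 4, 975, 2800)
print(f"RAID 4: {raid4:.0f} MB/s, RAID 5: {raid5:.0f} MB/s, ratio {raid5 / raid4:.2f}")
```

If you raise the bus limit above the aggregate blade speed, the two levels come out equal in this model, which is consistent with the condition that RAID 4 wins only when the drives can outrun the bus.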

One quick note about all the speed numbers I have used above. These are the theoretical maximum numbers and don’t take into account things like driver latencies, file system overhead and the time it takes each NVMe blade to respond. So, while you won’t necessarily see 2.8 GB/sec over Thunderbolt, the relationship between RAID 4 and RAID 5 performance is something we see every time we test in our lab.

Larry: Let’s say, for cost reasons, that we decide to populate this enclosure with 2 TB SSDs. Can we upgrade the storage later to 4 TB (or larger) chips as they come down in price? (We can do this now with spinning media by replacing each drive one at a time with a larger drive, allow the RAID to rebuild, then replace the next drive. SoftRAID will automatically access the larger storage space. Does that same procedure work for SSD RAIDs?)

Tim: Yes, the same procedure works fine with SSD RAID volumes. We were held up for a while by a bug in macOS which prevented resizing APFS volumes. Apple included the fix in macOS 13. We have tested it, and their fix works correctly.
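To see what the one-drive-at-a-time upgrade does to capacity, here is a rough Python sketch. It assumes the usual parity RAID behavior where each member contributes only as much space as the smallest drive in the set, so the extra room appears once the last drive is swapped. That is an assumption about typical RAID 4 or 5 volumes, not a documented description of how SoftRAID resizes.

```python
def raid4_usable_tb(member_sizes_tb: list[float]) -> float:
    """Usable space when every member is limited to the smallest drive."""
    return (len(member_sizes_tb) - 1) * min(member_sizes_tb)


members = [2.0, 2.0, 2.0, 2.0]  # start with four 2 TB blades
history = []

for slot in range(len(members)):
    members[slot] = 4.0          # swap in a 4 TB blade, then let the RAID rebuild
    usable = raid4_usable_tb(members)
    history.append(usable)
    print(f"after replacing blade {slot + 1}: {usable:.0f} TB usable")
```

Under that assumption, the volume stays at 6 TB through the first three swaps and grows to 12 TB only after the fourth.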

SUMMARY

In the past, we used HDD RAIDs to get faster performance, along with greater storage capacity. The rules are similar with SSDs, except that, while the storage is less than with HDD RAIDs, the performance is far, far faster. Just remember to format an SSD RAID as RAID Level 4 wherever possible.

15 Responses to Maximize Performance With an SSD RAID

Larry, your timing is perfect. Since upgrading my MacPro (2013) to the Mac Studio a few months ago, I’ve been (slowly) researching SSD RAIDs, as almost everything I’m getting now to edit is either UHD, 4K, or 5K. The Mac Studio definitely does a better job than the MacPro did, but we all “feel the need for speed.”

This article really helped (especially the RAID 4 explanation) me determine the best solution for my needs.

By the way, FCP 10.6.5 and Ventura have been working GREAT together…

Don:

Thanks for your comments and the report on FCP working with Ventura.

Larry

I’m echoing the comment above. Larry, your timing is perfect. I’m also upgrading to a Studio PC soon. I would also add that I looked at a model of OWC’s SSD drive boxes which uses easily swappable drives, so I can use one box for a long time, use swapped out drives for archive, and use larger capacity drives as they become available… Thanks again for this, I’m an avid follower of your posts…

Rodney:

Thanks for your kind words. However, I would urge caution in your planning. You use SSDs for their speed, then use HDDs for archiving. HDDs hold more and hold it longer than SSDs. Buy SSDs for performance, and HDDs for capacity.

Larry

Got it and thank you for this as well! 🙂

Can we combine four 2.5″ SSDs and two NVMe SSDs on a single RAID controller?

Kindly tell me which RAID controller would be best.

Ifra:

Maybe. If all devices can be mounted to the desktop at the same time, the answer is probably yes.

Look into SoftRAID from OWC – http://www.macsales.com

Larry

Larry,

I have been using a Drobo 5D raid setup for years now and really love how it all works and is easy to manage. However, I’ve been getting a Legacy System Extension warning from my Mac about the software becoming incompatible with a future version of macOS. I figured Drobo would roll out an update to address this but I found this on the Drobo home page:

“As of January 27th, 2023, Drobo support and products are no longer available.

Drobo support has transitioned to a self-service model. The knowledge base, documentation repository, and legacy documentation library are still accessible for your support needs.

We thank you for being a Drobo customer and entrusting us with your data.

“

I guess I missed the boat on this one. Sounds like I need to start shopping around for an entirely new backup system. Do you have any recommendations for someone coming from the very plug-and-play-friendly Drobo ecosystem?

(We actually have two Drobo 5Ds with the maximum storage space allowable. One is set up as RAID 5, and the second Drobo is a clone; I use Carbon Copy Cloner to periodically run a full copy.)

Brian:

Yup, Drobo’s boat has sailed and left the rest of us on the shore.

Any RAID from a reputable manufacturer – OWC, LaCie, Promise – can do what the Drobo did – and faster. Drobo was known for its ease of expandability, and it was unique in that. However, any RAID can be expanded; it just takes a bit longer.

My suggestion is to buy the storage you need plus a bit more to grow into.

Larry

Two issues/questions:

1) Why aren’t the reads on RAID5 happening in parallel?

2) If the parity isn’t read, then how does it verify the data is good?

Shannon:

Two good questions.

1. My understanding is that both reads and writes happen in parallel. However, the data is different going to each drive. This is how a RAID is able to achieve the speeds it does.

2. My guess, because I’m not a programmer, is that parity verification is looking at sectors on the disk, not the files on the disk. If the sectors match, by definition, the data is good. This means parity checking can occur whenever the RAID is not busy reading or writing. (“Sectors” is a spinning media term, but the concept is the same for SSDs.) Parity checking verifies hardware and data integrity, not file integrity. Again, this is my understanding. A programmer would have a more detailed explanation.

Larry

Hi Larry,

I found your article because I’m going to implement a solution with flash drives at my company, and I was researching the best RAID solution. RAID 4 is in fact quicker than RAID 5 if most of your tasks are reads from disk. But if you have a lot of writes to the RAID, from what I understand, the dedicated parity disk can’t accept parity writes from multiple stripes at the same time, so each write has to wait for the previous parity write to finish. This will lead to poor write performance compared with RAID 5/6.

Did you make any speed tests with RAID 5 on an NVMe SSD RAID? Can you share them?

From what I understand, the RAID 5 problem is that you will be writing parity data all the time to all the disks, and this could reduce the lifespan of the SSDs if they are not Enterprise grade.

Thank you for sharing your thoughts.

Joao:

Thanks for writing. First, yes, I did testing comparing RAID 0, 1, 4, 5 and 10 using a ThunderBlade NVMe SSD RAID from OWC. You can read my article here:

https://larryjordan.com/articles/review-owc-thunderblade-ssd-raid-really-fast-but-not-as-fast-as-you-imagine/

However, what is even more important than RAID level is how the device(s) are connected to your computer. The current speed limit for Thunderbolt 3/4 is 2.85 GB/second. (The rest of the bandwidth is reserved for monitors and can’t be used for data.) The current maximum speed of a single NVMe SSD is, roughly, 3 GB/second. So, if you connect multiple NVMe SSDs in a RAID, you won’t see a speed improvement because Thunderbolt limits you.

The workaround is to connect each SSD to its own Thunderbolt channel. On a Mac, one Thunderbolt channel spans two ports. However, most Macs only have two Thunderbolt channels, which rules out RAID 4 or 5, which require a minimum of three drives. (I can’t speak for PCs because I don’t use them.)

The other problem is that many NVMe SSDs limit bandwidth from each drive to minimize heat. This works because the total bandwidth of the entire RAID is limited by Thunderbolt. This is the technique used by OWC.

The real benefit to an SSD RAID is not speed, but storage capacity.

So, as you look into your operation, look first at overcoming bandwidth constraints. After that, the difference between RAID 4 and 5 becomes much less significant.

Also, ALL SSDs “wear out” from excessive writes, even Enterprise units. What Enterprise units do to compensate is that they overprovision the drive with spare cells so as they wear out, they have extras on hand to replace them.

Larry

Great thread! Thanks Larry. I started with SCSI RAIDs on RAID 3, then SATA RAID over 5 or 10, which were great systems with incredible parity check-sum reliability. I have read and watched users discuss NVMe/SSD RAIDs which have highly questionable data parity processes, either relying on the SSD to report data errors or even re-writing the parity data in favor of the corrupted data. I’m now digging for deeper analysis of NVMe SSD RAID reliability vs. raw speed specs and there is very little out there. Any sage experience in this regard?

Jon:

I have not run into any specific news or issues regarding parity. My conversations with engineers at OWC tell me that RAID 4 is optimized for SSDs by storing all parity data on one drive – which simplifies separating parity data from “good” data, thus increasing the speed of the unit. My test results bear this out.

Just as with HDD RAIDs, RAID 0 is fastest. However, I haven’t read anything which says parity data on SSDs is unreliable.

Larry